GPULab Client CLI

Prerequisites

An Account

To use GPULab, you need an account on either:

- The imec Testbed Portal (for IDLab members)

- The Fed4FIRE Portal.

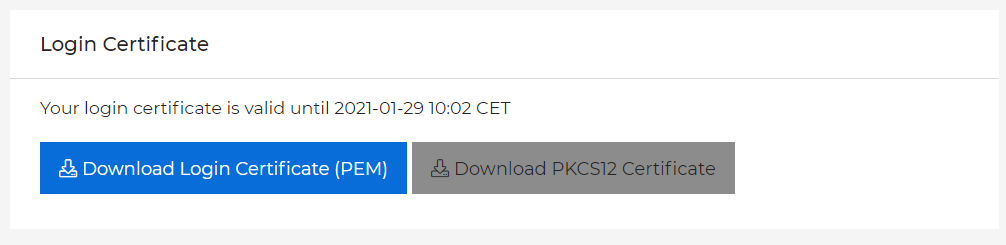

After signing up, you need to download your Login Certificate (PEM), which you can find at the bottom of the profile page of your portal.

Python

To install the gpulab-client, you need pip for Python 3. To install pip on Debian/Ubuntu, try:

sudo apt-get install python3-pip

Make sure you have at least Python 3.7, the minimum version that GPULab requires. You can check with:

python3 --version

Tip: Using pyenv to install GPULab in a separate environment

If your Linux distribution does not have a recent enough Python, try using pyenv which works on (almost) any Linux:

curl -L https://raw.githubusercontent.com/pyenv/pyenv-installer/master/bin/pyenv-installer | bash

pyenv update

#optional on debian: apt-get install libbz2-dev libreadline-dev libsqlite3-dev

pyenv install 3.7.17

pyenv local 3.7.17

pyenv versions

python3 --version

The last command should show that you now have a recent enough Python version.

(Note: If you install Python locally using this method, you do not need to add sudo in front of the installation command in the next section.)

Installation

To install, run:

pip3 install imecilabt-gpulab-cli

The Python “pip” system takes care of all the details. You will end up with a local install of gpulab-cli.

GPULab requires Python >= 3.7, which is the default on Ubuntu from 19.04 onwards.

Please report any bugs to gpulab@ilabt.imec.be. Include your username, project and a relevant job ID if possible.

Basic CLI usage

After installation, the gpulab-cli command is available:

Usage: gpulab-cli [OPTIONS] COMMAND [ARGS]...

GPULab client version 3.2.0

This is the general help. For help with a specific command, try:

gpulab-cli <command> --help

Send bugreports, questions and feedback to: gpulab@ilabt.imec.be

Documentation: https://doc.ilabt.imec.be/ilabt/gpulab/

Overview page: https://gpulab.ilabt.imec.be/

Overview page (for --dev): https://dev.gpulab.ilabt.imec.be/

Options:

--cert PATH Login certificate [required]

-p, --password TEXT Password associated with the login certificate

--dev Use the GPULab staging environment (this option is

only kept for backward compatibility. It was

renamed to --staging)

--staging Use the GPULab staging environment

--stable Use the GPULab production environment (this option

is only kept for backward compatibility. It was

renamed to --production)

--production Use the GPULab production environment (default)

--custom-master-url TEXT Use a custom URL as GPULab master

--debug Some extra debugging output

--servercert PATH The file containing the servers (self-signed)

certificate. Only required when the server uses a

self signed certificate.

--version Show the version and exit.

-h, --help Show this message and exit.

Commands:

bugreport Get context info for including in a bug report

cancel Cancel running job

clusters Retrieve info about the available clusters. If a cluster_id is

specified, detailed info about the slaves of that cluster is

shown.

convert Convert a Job to Job2 format

debug Retrieve a job's debug info. (Do not rely on the presence or

format of this info. It will never be stable between versions.

If this has the only source of info you need, ask the

developers to expose that info in a different way!)

hold Hold queued job(s). Status will change from QUEUED to ONHOLD

interactive Request an "interactive" job.

jobs Get info about one or more jobs

log Retrieve a job's log

release Release held job(s). Status will change from ONHOLD to QUEUED

rm Remove job

sftp Access files in a Job's container using SFTP.

ssh Log in to a Job's container using SSH.

submit Submit a job request

wait Wait for a job to change state

To get a list of currently running jobs:

$ gpulab-cli --cert /home/me/my_wall2_login.pem jobs

TASK ID NAME COMMAND CREATED USER PROJECT STATUS

7eaec798-ac49-11e9-93a1-cfd87533270a JupyterHub-singleuse *** 2019-07-22T08:25:26+02:00 pbonte Orca RUNNING

ca38f0c4-ac23-11e9-93a1-1757063cb6eb JupyterHub-singleuse *** 2019-07-22T03:55:32+02:00 ykuno DeepBeamforming RUNNING

da112f92-ac1f-11e9-93a1-cbc72e039c23 JupyterHub-singleuse *** 2019-07-22T03:27:21+02:00 ykuno DeepBeamforming CANCELLED

Recommendation

The GPULab CLI also supports getting your login certificate information from an environment variable.

This allows you to omit the --cert argument from all gpulab-cli commands.

export GPULAB_CERT='/home/me/my_wall2_login.pem'

If you append this export to ~/.bashrc, you’ll never have to type the certificate filename again!

The same command to get a list of currently running jobs is now much shorter:

gpulab-cli jobs
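As a minimal sketch of the ~/.bashrc suggestion, the helper below appends the export line to a shell startup file only when no GPULAB_CERT export is present yet, so running it twice does not add a duplicate. The function name add_gpulab_cert_export is hypothetical (it is not part of the GPULab CLI), and the PEM path is the example path used in this section.

```shell
# Hypothetical helper, not part of gpulab-cli: add the GPULAB_CERT export
# to a startup file (default: ~/.bashrc) unless one is already there.
add_gpulab_cert_export() {
    pem="$1"                        # path to your login certificate (PEM)
    rcfile="${2:-$HOME/.bashrc}"    # startup file to modify

    if grep -q "^export GPULAB_CERT=" "$rcfile" 2>/dev/null; then
        echo "GPULAB_CERT is already exported in $rcfile" >&2
    else
        printf "export GPULAB_CERT='%s'\n" "$pem" >> "$rcfile"
    fi
}

# Usage (afterwards open a new shell, or run: . ~/.bashrc):
#   add_gpulab_cert_export /home/me/my_wall2_login.pem
```

The existing line is left untouched when one is found, so change the path by editing the startup file by hand.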

Note

Using the CLI without password

You can use the CLI without a password. Be aware that this lowers security.

You need to install openssl to execute the commands below. On Debian, try:

sudo apt-get install openssl

The password is “stored” in the PEM file, because it is used to encrypt the private RSA key inside the PEM file. You can decrypt the RSA key and store it, to remove the password. Below, we assume that your (password protected) wall2 PEM file is in my_wall2_login.pem. The commands will create the file my_wall2_login_decrypted.pem which will not be password protected.

Use these commands:

openssl rsa -in my_wall2_login.pem > my_wall2_login_decrypted.pem

sed -ne '/-----BEGIN CERTIFICATE-----/,/-----END CERTIFICATE-----/p' < my_wall2_login.pem >> my_wall2_login_decrypted.pem

(The first command will ask for your password; the second won’t.)
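After running the two commands, it can be worth checking the result before pointing GPULAB_CERT or --cert at it. The sketch below only inspects the PEM markers in the file: it confirms that no encryption marker is left, that an unencrypted private key and a certificate are both present, and then restricts the file permissions. The function name check_decrypted_pem is hypothetical and not part of the GPULab CLI.

```shell
# Hypothetical helper: sanity-check a decrypted login certificate.
check_decrypted_pem() {
    pem="$1"

    # Password-protected PEM keys carry one of these two markers.
    if grep -Eq '^(Proc-Type: 4,ENCRYPTED|-----BEGIN ENCRYPTED PRIVATE KEY-----)' "$pem"; then
        echo "private key in $pem is still password protected" >&2
        return 1
    fi
    # An unencrypted key is written as "RSA PRIVATE KEY" or "PRIVATE KEY",
    # depending on the OpenSSL version.
    if ! grep -Eq '^-----BEGIN (RSA )?PRIVATE KEY-----' "$pem"; then
        echo "no unencrypted private key found in $pem" >&2
        return 1
    fi
    if ! grep -q '^-----BEGIN CERTIFICATE-----' "$pem"; then
        echo "no certificate found in $pem" >&2
        return 1
    fi
    # The key is no longer protected by a password, so keep the file private.
    chmod 600 "$pem"
    echo "$pem: unencrypted key and certificate found"
}

# Usage:
#   check_decrypted_pem my_wall2_login_decrypted.pem
```

This is a structural check only; it does not verify that the key and the certificate belong together.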

Submitting a GPULab Job

A GPULab job is defined by a JSON job definition, which looks as follows:

{
    "name": "helloworld",
    "description": "Hello World!",
    "request": {
        "resources": {
            "cpus": 2,
            "gpus": 1,
            "cpuMemoryGb": 2,
            "clusterId": 4
        },
        "docker": {
            "image": "debian:stable",
            "command": "nvidia-smi"
        },
        "scheduling": {
            "interactive": true
        }
    }
}

To submit the job, you’ll have to specify the name of the project on the wall2 authority in which you want it to run. The command is:

$ gpulab-cli submit --project=myproject < my-first-jobRequest.json

78125766-0b45-11e8-be1c-0fbd357c0b05

The ID of the new job (a UUID) is returned.
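When submitting from a script, it can help to catch a malformed job definition locally and to keep the returned job ID in a variable. The wrapper below is a sketch under a few assumptions: the function name submit_job is hypothetical, GPULAB_CERT is already exported so --cert can be omitted, python3 is on the PATH (it is used only for its built-in JSON syntax check), and the job ID is the only thing gpulab-cli submit prints on standard output. The check covers JSON syntax only, not whether the fields are valid for GPULab.

```shell
# Hypothetical wrapper around "gpulab-cli submit".
submit_job() {
    project="$1"     # project to run the job in
    job_file="$2"    # JSON job definition

    # json.tool exits with a non-zero status on malformed JSON
    # (this branch is also taken when python3 itself is missing).
    if ! python3 -m json.tool "$job_file" > /dev/null; then
        echo "$job_file did not pass the JSON check; not submitting" >&2
        return 1
    fi
    gpulab-cli submit --project="$project" < "$job_file"
}

# Usage:
#   job_id="$(submit_job myproject my-first-jobRequest.json)"
#   gpulab-cli jobs "$job_id"
```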

Getting information on a running job

You can query the status of this job using (a unique prefix of) the job ID:

$ gpulab-cli jobs 7812

Job ID: 78125766-0b45-11e8-be1c-0fbd357c0b05

Name: helloworld

Project: fed4fire

Username: wvdemeer

Docker image: debian:stable

Command: nvidia-smi

Status: FINISHED

Created: 2018-02-06T13:56:02-07:00

Worker ID: -

Worker hostname: 192.168.0.1

Started: 2018-02-06T14:09:44-07:00

Duration: 1 second

Finished: 2018-02-06T14:09:45-07:00

Deadline: 2018-02-07T00:09:44-07:00
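In a script you often need only the status field from this output. The helper below is a rough sketch that assumes the output keeps the "Status: VALUE" layout shown above, which may differ between client versions; the function name job_status is hypothetical. The CLI also lists a wait command for waiting on state changes; see gpulab-cli wait --help for its options.

```shell
# Hypothetical helper: print the Status field of a job, e.g. FINISHED.
# Assumes "gpulab-cli jobs <id>" prints a line of the form "Status: VALUE".
job_status() {
    gpulab-cli jobs "$1" | awk '$1 == "Status:" { print $2 }'
}

# Usage:
#   if [ "$(job_status 7812)" = "FINISHED" ]; then
#       gpulab-cli log 7812
#   fi
```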

Getting logs of a job

You can view the command line output of the job using log:

$ gpulab-cli log 7812

2018-02-06T14:09:45.185400608Z

2018-02-06T14:09:45.185451167Z ==============NVSMI LOG==============

2018-02-06T14:09:45.185459009Z

2018-02-06T14:09:45.185466771Z Timestamp : Tue Feb 6 14:09:45 2018

2018-02-06T14:09:45.185471972Z Driver Version : 390.12

2018-02-06T14:09:45.185477068Z

2018-02-06T14:09:45.185490896Z Attached GPUs : 1

2018-02-06T14:09:45.185612743Z GPU 00000000:02:00.0

2018-02-06T14:09:45.185713708Z Product Name : GeForce GTX 580

2018-02-06T14:09:45.186226030Z Product Brand : GeForc

...

Getting the GPULab event log of a job

You can view the internal event log of GPULab. This is mostly useful for debugging purposes. It can contain error messages thrown by the GPULab code, which help you find the error in your job request or submit a bug report.

$ gpulab-cli debug ffe249

2019-07-22T11:13:41+02:00 STATE CHANGED -> QUEUED

2019-07-22T11:13:45+02:00 STATE CHANGED -> STARTING

2019-07-22T11:13:45+02:00 LOG INFO: Running job on cluster 5 host slave5a (slave5a.wall2.ilabt.iminds.be) worker 0

2019-07-22T11:13:46+02:00 LOG INFO: Fetching latest version of image 'jupyter/minimal-notebook:latest' (no auth)

2019-07-22T11:13:48+02:00 LOG INFO: Fetched image 'jupyter/minimal-notebook:latest' with hash sha256:37c4f3f362331d1107fcd8ea8d16a8b6b8171ad437e8b933bd0d35bd98dea718

2019-07-22T11:13:48+02:00 LOG INFO: Launching JupyterHub-singleuser (image jupyter/minimal-notebook:latest with command ['start-notebook.sh', '--notebook-dir=/project/', '--NotebookApp.default_url=/lab']

2019-07-22T11:13:48+02:00 LOG DEBUG: OK: Project dir "/data/twalcari-test" exists on slave5a-f2333333333333333333@stable-cluster5

2019-07-22T11:13:48+02:00 LOG DEBUG: OK: Project dir "/data/twalcari-test" exists on slave5a-f2333333333333333333@stable-cluster5

2019-07-22T11:13:48+02:00 LOG DEBUG: Mem_limit=2048m (job requested 2048m)

2019-07-22T11:13:48+02:00 LOG DEBUG: CPU ID restriction: None

2019-07-22T11:13:48+02:00 LOG DEBUG: Successfully setup SSH pubkey access step 1. ssh_username=D5MTPCUG

2019-07-22T11:13:49+02:00 LOG INFO: Started container 577de083df9fce36fef3a90573faa27b053641b39dc2421833986463831b385d

2019-07-22T11:13:49+02:00 LOG DEBUG: port_mapping_info={"8888/tcp": [{"HostIp": "0.0.0.0", "HostPort": "32851"}]}

2019-07-22T11:13:49+02:00 LOG DEBUG: ReadLogsThread for stdout stderr started

2019-07-22T11:13:49+02:00 LOG DEBUG: Successfully setup SSH pubkey access step 2. ssh_username=D5MTPCUG

2019-07-22T11:13:49+02:00 LOG DEBUG: Job run_details have been updated

2019-07-22T11:13:49+02:00 STATE CHANGED -> RUNNING

2019-07-22T11:14:08+02:00 LOG DEBUG: Docker container status is now "running". Job status is "JobState.STARTING".

2019-07-22T11:14:27+02:00 LOG DEBUG: Docker container status is now "running". Job status is "JobState.RUNNING".

Getting console access to a GPULab job

You can gain console access to a job with the ssh command of gpulab-cli. GPULab emulates SSH support on a Docker container by starting a Bash shell via docker exec and piping the input/output over the SSH connection. Because of this, GPULab SSH doesn’t support SCP, tunnels and other advanced SSH features. SFTP is supported, though.

Authentication for this SSH connection is done via the private key embedded in your login certificate.

Example:

$ gpulab-cli ssh fe8768

To see which underlying command is executed, use the --show flag of gpulab-cli ssh:

$ gpulab-cli ssh fe8768f5-d270-4ab7-a416-afa28c86511c --show

Short: ssh gpulab-fe8768f5

Full: ssh -i /home/thijs/.ssh/gpulab_cert.pem -oPort=22 5MVJPCLV@n083-09.wall2.ilabt.iminds.be

This can help you adapt these commands as needed, for example to use rsync on GPULab.

On connectivity

The GPULab-slaves have no public IPv4 addresses. To access your job over SSH, you need either IPv6 connectivity, or you must connect to the IDLab VPN to access them via their private IPv4 address.

GPULab will automatically try to use a proxy if you have no IPv6 connectivity.

Note

You should only use this function for debugging and developing your jobs. Generally speaking, jobs should be able to run without manual intervention. To achieve this, you can set up the environment for your job by creating a custom Docker container and/or by running a startup script.

SSH shorthands: ssh gpulab-<short_job-id> and ssh gpulab-<short_job-id>-proxy

The GPULab CLI automatically configures shorthand hostnames for you in the file ~/.ssh/gpulab_hosts_config. This allows you to use ssh gpulab-ffe249 instead of gpulab-cli ssh ffe249, and ssh gpulab-ffe249-proxy instead of gpulab-cli ssh --proxy ffe249.

This enables easy use of tools like rsync and ansible which use SSH themselves!

The GPULab CLI updates ~/.ssh/gpulab_hosts_config every time it receives information on one or more jobs that you are currently running (e.g. every time you call gpulab-cli jobs, gpulab-cli ssh or gpulab-cli sftp).

You can safely remove the ~/.ssh/gpulab_hosts_config file and the Include gpulab_hosts_config line in ~/.ssh/config if you want. GPULab will automatically recreate them if needed.
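Because the shorthand hostnames live in your SSH configuration, tools that run on top of ssh can use them directly. The sketch below wraps rsync to copy a local directory into a running job. The function name push_to_job and the paths in the usage example are hypothetical. It also assumes that rsync is installed inside the job's Docker image (rsync needs to be present on both ends) and that the job is reachable without the proxy. Use the shorthand printed by gpulab-cli ssh --show for your own job.

```shell
# Hypothetical helper: copy a local directory into a running job with rsync.
push_to_job() {
    host="$1"    # SSH shorthand, as printed by "gpulab-cli ssh <job-id> --show"
    src="$2"     # local directory
    dest="$3"    # destination directory inside the container
    rsync -av "$src" "$host:$dest"
}

# Usage (example paths; run "gpulab-cli jobs" first so that
# ~/.ssh/gpulab_hosts_config is up to date):
#   push_to_job gpulab-fe8768f5 ./code/ /project/code/
```

If you have no direct connectivity to the job, the -proxy variant of the shorthand can be passed as the host instead.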

Accessing files using SFTP

You can access (read/write) files in the job’s container using SFTP. This includes files in the root filesystem and files in the mounted project dirs. The GPULab CLI offers built-in support for several SFTP GUIs and the sftp CLI, and handles the SSH proxy for you if needed.

Authentication for the SSH session underlying the SFTP connection is done via the private key embedded in your login certificate. Take this into account when configuring your SFTP-client: you must select ‘private key authentication’ and select your login certificate as private key.

Example:

$ gpulab-cli sftp ffe249

This will ask which SFTP client to start (if multiple are available). You can also ask for a specific SFTP client:

$ gpulab-cli sftp ffe249 --filezilla

$ gpulab-cli sftp ffe249 --sftp

$ gpulab-cli sftp ffe249 --gftp

FileZilla is a popular cross-platform FTP GUI.

gFTP is a simple Linux FTP GUI.

sftp is the default command-line SFTP client (part of OpenSSH).

To understand how these commands work, you can use the --show flag of gpulab-cli sftp, which shows the underlying commands that are executed. Note that the SSH shorthands are used to make them easy to read and use. This also allows you to adapt these commands, for example when you want to use your own favorite file manager (such as GNOME Files, previously known as Nautilus, or KDE Konqueror) to access the files using SFTP.

$ gpulab-cli sftp --gftp --show 95925d5f

gftp ssh2://MGNO5Y6P@gpulab-95925d5f-proxy:22

$ gpulab-cli sftp --filezilla --show 95925d5f

ssh -L 2222:dgx1.idlab.uantwerpen.be:22 -N username@bastion.ilabt.imec.be

filezilla sftp://MGNO5Y6P@localhost:2222

$ gpulab-cli sftp --sftp --show 95925d5f

Short: sftp gpulab-95925d5f-proxy

Full: sftp -i /tmp/gpulab.pem -oPort=22 '-oProxyCommand=ssh -i /tmp/gpulab.pem username@bastion.ilabt.imec.be -W %h:%p' MGNO5Y6P@dgx1.idlab.uantwerpen.be

On FileZilla and proxy-support

FileZilla has no built-in support for connecting to a host via an SSH tunnel proxy. As you can see from the output of gpulab-cli sftp --filezilla --show 95925d5f, we solve this by first setting up a tunnel to a port on localhost. In the example above, the ssh command sets up a tunnel on port 2222 of your local machine and forwards traffic to port 22 on dgx1.idlab.uantwerpen.be with the help of our proxy host bastion.ilabt.imec.be.

While this command is running, you can then start a FileZilla-session which connects to the job via this tunnel. Once the FileZilla process stops, the GPULab CLI will automatically stop the SSH tunnel.

Interactive jobs

When you’re preparing a job, you don’t always know the command you want to run yet, or the script you want to run is not yet ready.

In these cases, you want to test things out inside a GPULab container, but don’t want the container to stop when the command stops.

You could start a job with sleep 600 as its command and log in with gpulab-cli ssh, but GPULab also has a specific command for this: gpulab-cli interactive.

gpulab-cli interactive requires you to specify the docker image, the duration and your project:

gpulab-cli interactive --project my-project --duration-minutes 10 --docker-image debian:stable

This will start a job, which waits for 10 minutes before stopping.

GPULab also automatically connects over ssh, so that you can run commands.

Inside the job, you’ll find a file /running. If you delete it, the job will stop (you can also cancel the job using gpulab-cli cancel from another terminal).

An example:

~ $ gpulab-cli --dev interactive --project my-project --duration-minutes 10 --docker-image debian:stable

30c1247c-b1f3-11e9-93a1-2f2d78d6eb89

2019-07-29 13:22:46 +0200 - - Waiting for Job to start running...

2019-07-29 13:22:48 +0200 - 2 seconds - Job is in state STARTING

2019-07-29 13:22:50 +0200 - 4 seconds - Job is in state RUNNING

2019-07-29 13:22:50 +0200 - 4 seconds - Job is now running

root@86b0f7321a8f:/# ls

bin dev home lib64 mnt proc root running srv tmp var

boot etc lib media opt project run sbin sys usr

root@86b0f7321a8f:/# rm /running

root@86b0f7321a8f:/# Connection to n085-02.wall2.ilabt.iminds.be closed.

wim@tolstoy ~ $

If you log out of the ssh session (without removing /running), the job does not stop, and you can reconnect to it using gpulab-cli ssh.

Check gpulab-cli interactive --help for options that let you specify the cluster ID, the number of CPUs and GPUs, the amount of memory, and more.